Iflexion delivered an MVP version of a custom AR app that simulates the physical presence of two callers in video calls.

AR App for Superimposed Video Calling

Context

Our customer, an Asia-Pacific startup, came up with the idea of a mobile video calling application that would use augmented reality to superimpose elements of the two callers’ videos and thus simulate the physical presence of both callers in the call.

As a startup seeking funding, the customer needed an MVP of the app to demonstrate to potential investors. The customer had ordered MVP development from third-party developers, but the resulting prototype didn’t work as expected.

The customer wanted to restart the AR project and redevelop the existing MVP within three months so that the basic functionality could be pitched on Nexus 6P and Google Pixel XL smartphones as well as Nexus 9 and Google Pixel C tablets. They approached Iflexion because of our experience in augmented reality development and our track record of delivering successful MVPs.

Solution

At the start of the AR project, we proposed analyzing the existing prototype first, suspecting that its core algorithms had issues. The analysis confirmed this, so we proposed proceeding with research and development. We moved from one working hypothesis to the next, implementing, testing, and adjusting the algorithm each time, until we had a working MVP of a real-time object recognition system.

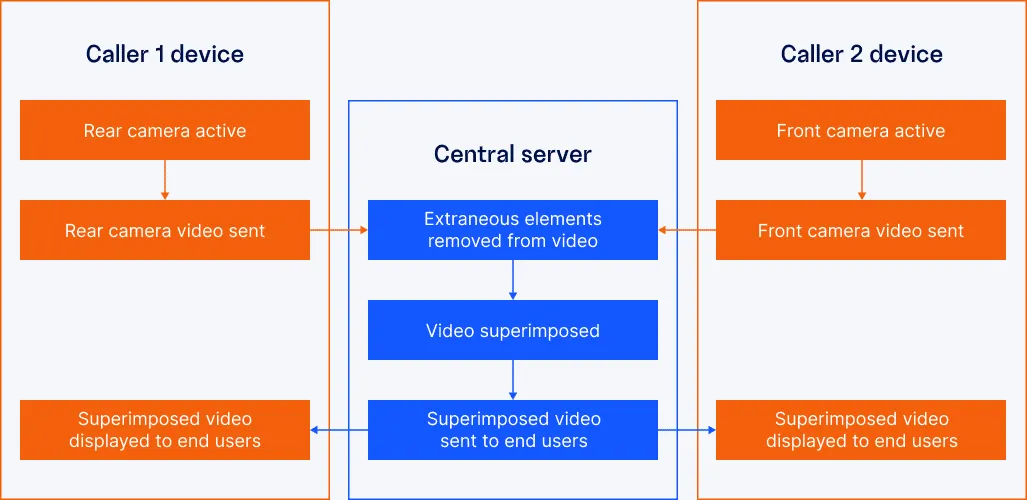

The MVP we delivered supports video streaming, viewing the superimposed video, and configuring rear camera video stream parameters in real time. Users start a video call and capture themselves with their mobile devices’ cameras. The central server receives the two video streams from the mobile applications, removes extraneous video elements, overlays the streams, and returns the mixed stream to the users’ mobile devices.
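The overlay step can be pictured as a per-pixel selection: wherever a mask marks a pixel as belonging to one caller, that caller’s pixel is kept, and elsewhere the other caller’s frame shows through. Below is a minimal Python sketch, assuming both frames are already decoded to the same size and that an earlier recognition step produced the mask; `overlay_frames` and the list-of-tuples frame layout are hypothetical, chosen for readability rather than speed.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]   # (R, G, B), each 0-255
Frame = List[List[Pixel]]      # a frame is a list of pixel rows
Mask = List[List[bool]]        # True where the foreground caller was detected


def overlay_frames(caller: Frame, mask: Mask, scene: Frame) -> Frame:
    """Hypothetical helper: paste masked caller pixels onto the other frame.

    Assumes both frames have identical dimensions and that the mask comes
    from an earlier recognition step.
    """
    if not (len(caller) == len(mask) == len(scene)):
        raise ValueError("frames and mask must have the same height")
    mixed: Frame = []
    for cam_row, mask_row, scene_row in zip(caller, mask, scene):
        if not (len(cam_row) == len(mask_row) == len(scene_row)):
            raise ValueError("frames and mask must have the same width")
        # Keep the caller's pixel where the mask is set, otherwise the scene's.
        mixed.append([c if keep else s
                      for c, keep, s in zip(cam_row, mask_row, scene_row)])
    return mixed


if __name__ == "__main__":
    caller = [[(200, 150, 120), (0, 255, 0)]]
    mask = [[True, False]]
    scene = [[(10, 10, 10), (20, 20, 20)]]
    print(overlay_frames(caller, mask, scene))  # [[(200, 150, 120), (20, 20, 20)]]
```

A production server would do the same on whole image buffers (for example, with OpenCV’s `Mat::copyTo` and its mask argument) rather than on Python lists.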

Technologies & Tools

The delivered MVP is a client-server mobile solution. We developed the server application using C and C++, while the mobile client was built with Java.

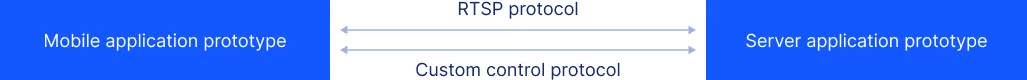

The mobile and server parts communicate through a custom control protocol, which processes control commands and tracks statuses, and the Real Time Streaming Protocol (RTSP), which sets up and controls the video streams passed from the mobile application to the server application and back (the media itself is typically carried over RTP).
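RTSP is a text-based protocol with a syntax similar to HTTP/1.1 (RFC 2326): each request starts with a method, a URL, and the protocol version, and every message carries a `CSeq` sequence number. The Python sketch below formats a request and reads the status code from a reply. It only illustrates the message shape; the server URL is a hypothetical placeholder, and the custom control protocol is not modeled.

```python
from typing import Dict, Optional, Tuple

CRLF = "\r\n"


def build_rtsp_request(method: str, url: str, cseq: int,
                       headers: Optional[Dict[str, str]] = None) -> str:
    """Format an RTSP/1.0 request: request line, CSeq, extra headers, blank line."""
    lines = [f"{method} {url} RTSP/1.0", f"CSeq: {cseq}"]
    for name, value in (headers or {}).items():
        lines.append(f"{name}: {value}")
    return CRLF.join(lines) + CRLF + CRLF


def parse_status_line(response: str) -> Tuple[int, str]:
    """Return (status code, reason phrase) from the first line of a reply."""
    status_line = response.split(CRLF, 1)[0]
    version, code, reason = status_line.split(" ", 2)
    if not version.startswith("RTSP/"):
        raise ValueError(f"not an RTSP response: {status_line!r}")
    return int(code), reason


if __name__ == "__main__":
    # Hypothetical stream URL on the central server (documentation address range).
    request = build_rtsp_request("OPTIONS", "rtsp://192.0.2.10:8554/caller1", 1)
    print(repr(request))
    print(parse_status_line("RTSP/1.0 200 OK\r\nCSeq: 1\r\n\r\n"))  # (200, 'OK')
```

In a full session, a client typically follows OPTIONS with DESCRIBE, SETUP, and PLAY, incrementing `CSeq` each time.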

Server App Prototype

To implement real-time video recognition and streaming in line with the AR project specification, Iflexion extended the built-in capabilities of two open-source frameworks: the OpenCV computer vision library and the GStreamer multimedia framework.

- OpenCV: Our experts improved OpenCV’s object detection and motion tracking features and implemented automated background image enhancement for low or changing light conditions.

- GStreamer: After an investigation, we enhanced GStreamer’s RTSP streaming and reduced streaming latency from 15 to 1.5 seconds for 1280x720 video over 5 GHz Wi-Fi.
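The OpenCV item mentions automated background enhancement in low or changing light, but how it worked is not detailed here. As a stand-in, the sketch below shows min-max contrast stretching, a common simple way to brighten a dim frame: the darkest and brightest luma values in the frame are remapped to 0 and 255, and everything in between is scaled linearly. It works on a flat list of 8-bit luma values; `stretch_contrast` is a hypothetical name, and OpenCV’s `normalize` with `NORM_MINMAX` performs the equivalent operation on image matrices.

```python
from typing import List


def stretch_contrast(luma: List[int]) -> List[int]:
    """Linearly remap 8-bit luma values so the frame spans the full 0-255 range.

    Stand-in technique (min-max stretching), not the project's actual method.
    Integer floor division keeps every result within 0..255.
    """
    if not luma:
        return []
    lo, hi = min(luma), max(luma)
    if hi == lo:
        # A flat frame has no contrast to stretch; return it unchanged.
        return list(luma)
    return [(v - lo) * 255 // (hi - lo) for v in luma]


if __name__ == "__main__":
    dim_frame = [20, 30, 40, 60]          # hypothetical low-light luma samples
    print(stretch_contrast(dim_frame))    # [0, 63, 127, 255]
```

Because the mapping is recomputed for every frame, it adapts as lighting changes; practical versions usually clip a small percentile at each end so a few very bright or dark pixels do not cancel the effect.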

Mobile App Prototype

Our team used libVLC to display videos in the application and libstreaming for real-time camera streaming. To speed up video encoding and decoding, we used hardware acceleration.
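A quick calculation shows why fast, compressed encoding matters at this resolution. The sketch assumes 30 frames per second and YUV 4:2:0 sampling (1.5 bytes per pixel); neither figure comes from the project description. Under those assumptions, raw 1280x720 video needs roughly 332 Mbit/s, and each frame must be captured, encoded, and sent within about 33 ms to keep up with the camera.

```python
def raw_bitrate_mbps(width: int, height: int, fps: float,
                     bytes_per_pixel: float = 1.5) -> float:
    """Uncompressed video bitrate in Mbit/s (1.5 bytes/pixel = YUV 4:2:0)."""
    return width * height * bytes_per_pixel * fps * 8 / 1_000_000


def frame_budget_ms(fps: float) -> float:
    """Time available to process one frame before the next one arrives."""
    return 1000 / fps


if __name__ == "__main__":
    fps = 30  # assumed frame rate
    print(f"raw 720p: {raw_bitrate_mbps(1280, 720, fps):.1f} Mbit/s")  # 331.8
    print(f"per-frame budget: {frame_budget_ms(fps):.1f} ms")          # 33.3
```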

Development Process

Technology Audit

We started the AR project by testing the existing MVP and found several pitfalls in the original algorithm. It couldn’t remove the object’s shadow, and it converted RGB images to grayscale, discarding color information. It detected a hand only against a uniform, high-contrast background. Moreover, the recognition area was limited to 120x120 pixels. Finally, because it relied on static gesture recognition, the algorithm could not track the object’s motion.
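Grayscale conversion hurts hand detection because very different colors can collapse to the same gray level. OpenCV documents its RGB-to-gray conversion as Y = 0.299 R + 0.587 G + 0.114 B; the sketch below applies those weights to a skin tone and a neutral gray wall (both sample colors are hypothetical) and shows that the two become indistinguishable.

```python
from typing import Tuple

RGB = Tuple[int, int, int]


def to_gray(rgb: RGB) -> int:
    """Luma with the 0.299/0.587/0.114 weights, rounded to an 8-bit level."""
    r, g, b = rgb
    return round(0.299 * r + 0.587 * g + 0.114 * b)


def rgb_distance(a: RGB, b: RGB) -> float:
    """Euclidean distance between two colors in RGB space."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5


if __name__ == "__main__":
    skin = (224, 172, 105)   # hypothetical hand color
    wall = (180, 180, 180)   # hypothetical gray background
    print(to_gray(skin), to_gray(wall))          # 180 180
    print(round(rgb_distance(skin, wall), 1))    # 87.3
```

The colors are far apart in RGB space, yet after conversion a gray-level threshold has nothing to separate them with.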

R&D

To improve the existing recognition algorithm, we reviewed the computer vision technologies available on the market and found that the OpenCV library addressed the major recognition pitfalls of the existing MVP. With its built-in features, it could properly detect and mask an object on a solid-color background: it measured the object’s control points, tracked them, and filtered out colors that didn’t match the control points’ color values. OpenCV also resolved the grayscale conversion and limited recognition area problems.
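Masking an object on a solid-color background comes down to discarding pixels whose color is close to the known background color. The sketch below is a simplified Python illustration, assuming the background color is known in advance and using a Euclidean RGB distance with a hypothetical tolerance; OpenCV’s `inRange` performs a comparable per-channel range test. It shows only the color-filtering idea, not control-point tracking.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]


def key_out_background(frame: List[List[Pixel]], bg_color: Pixel,
                       tolerance: int) -> List[List[bool]]:
    """Return a mask that is True where a pixel differs from the background.

    A pixel counts as background when its squared RGB distance to bg_color
    is within tolerance**2 (hypothetical threshold, tuned per scene).
    """
    tol_sq = tolerance * tolerance
    mask = []
    for row in frame:
        mask.append([
            sum((c - b) ** 2 for c, b in zip(pixel, bg_color)) > tol_sq
            for pixel in row
        ])
    return mask


if __name__ == "__main__":
    green = (0, 255, 0)  # assumed solid background color
    frame = [[(0, 255, 0), (10, 245, 5), (200, 150, 120)]]
    print(key_out_background(frame, green, tolerance=30))  # [[False, False, True]]
```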

Despite these merits, OpenCV alone didn’t solve the problem of motion detection and tracking. With sudden movements or background changes, the object was lost, and recognition quality dropped when lighting conditions and shooting angles changed.

To address these issues, we extended the OpenCV code with the following features:

- HSV and CMYK color models to improve recognition quality in poor or changing lighting and against non-uniform backgrounds.

- Gradient-based adaptive thresholding: dynamic approximation of the control points’ color values to better separate objects of interest from the background.

- Mixing of similar color values in unrecognized areas inside and near the object contour to minimize defects.
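The color-model and gap-filling items can be illustrated with a short sketch. Hue in HSV stays nearly constant when a surface gets darker, so a hue-and-saturation test is less sensitive to lighting than a raw RGB comparison; a simple neighbor-majority pass then closes single-pixel gaps in the mask. This Python code uses the standard `colorsys` module; the hue range and saturation threshold are hypothetical values, CMYK is not shown, and the gap filling is a stand-in for the color-mixing step rather than the team’s actual method. In OpenCV, the usual equivalents are `cvtColor` with `COLOR_BGR2HSV` and a morphological closing via `morphologyEx`.

```python
import colorsys
from typing import List, Tuple

Pixel = Tuple[int, int, int]
Mask = List[List[bool]]


def hsv_mask(frame: List[List[Pixel]], hue_range: Tuple[float, float],
             min_saturation: float) -> Mask:
    """True where the pixel's hue (degrees) is in range and it is not washed out."""
    lo, hi = hue_range
    mask: Mask = []
    for row in frame:
        mask_row = []
        for r, g, b in row:
            h, s, _v = colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)
            # Brightness (v) is ignored on purpose: dim and bright skin share a hue.
            mask_row.append(lo <= h * 360 <= hi and s >= min_saturation)
        mask.append(mask_row)
    return mask


def fill_gaps(mask: Mask) -> Mask:
    """Mark an interior pixel as object if at least 3 of its 4 neighbors are."""
    height = len(mask)
    width = len(mask[0]) if height else 0
    filled = [row[:] for row in mask]
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            if not mask[y][x]:
                neighbors = (mask[y - 1][x], mask[y + 1][x],
                             mask[y][x - 1], mask[y][x + 1])
                if sum(neighbors) >= 3:
                    filled[y][x] = True
    return filled


if __name__ == "__main__":
    bright_skin, dim_skin, wall = (224, 172, 105), (112, 86, 52), (180, 180, 180)
    frame = [[bright_skin, dim_skin, wall]]
    print(hsv_mask(frame, hue_range=(20, 50), min_saturation=0.3))
    # [[True, True, False]]
    holey = [[True, True, True], [True, False, True], [True, True, True]]
    print(fill_gaps(holey)[1][1])  # True
```

The bright and dim skin samples differ mainly in value (brightness), so both pass the hue test, while the gray wall fails on saturation.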

Stabilization

One of the major tasks in the AR project was to make the solution work on Nexus 6P and Google Pixel XL smartphones as well as Nexus 9 and Google Pixel C tablets. We tested the MVP using an exploratory approach: our team connected to the customer’s devices, tracked the event logs, and fixed the algorithm in real time.

The problems we faced included inaccurate object masking, high transmission latency, and dark video on Google Pixel screens. We fixed all the camera issues and were ready to deploy the app by the planned deadline.

Results

We improved the customer’s video calling application prototype within the time frame set for this AR project. In three months, we developed the MVP, implemented video stream transmission, and verified that the system prototype functioned correctly.

Satisfied with Iflexion’s contribution, the customer set out to demonstrate the MVP to potential investors and raise funds for a full-fledged video calling app.